In a word, no.

But that hasn’t halted a fierce debate that has been raging for years about how a computerised car might assess human life in an accident.

First thought up in the 1960s, the so-called ‘Trolley Problem’ is now being projected onto driverless cars, raising some rather morbid moral questions.

In its traditional setting, the scenario asks whether, as you see a train heading down the tracks about to run over five people, you would switch the tracks so that it kills one person instead. Is it better to kill several people by inaction, or deliberately kill one?

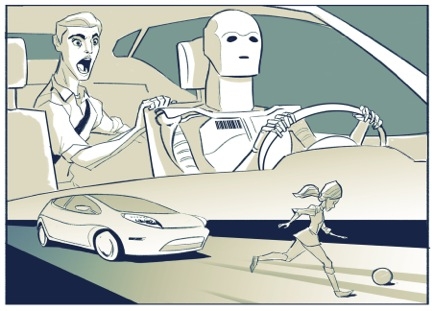

Trolley cars might be a thing of the past in most places but the hypothetical question lives on, directed now towards driverless cars. If a car is heading towards an unavoidable accident, should it be able to make a decision about who to hit, or simply hit whomever it reaches first?

The debate focuses on the decision-making powers of computers – if presented with the choice of hitting an adult or a child, a child or a dog, three adults or one, which would the car choose? Should the car even be given a choice, and could it be trusted to come to the right conclusion?

Brad Templeton, director of the Electronic Frontier Foundation and self-styled ‘robotic car strategist’, runs a section of his blog dedicated to advances in driverless tech, and has written about this topic extensively in the past, questioning how important the trolley question really is.

“I don’t want to pretend that this isn’t a morbidly fascinating moral area, and it will indeed affect the law, liability and public perception,” Brad writes on his blog, Brad Ideas. “What I reject is the suggestion that this is anywhere high on the list of important issues and questions. I think it’s high on the list of questions that are interesting for philosophical class debate, but that’s not the same as reality.

“In reality, such choices are extremely rare. How often have you had to make such a decision, or heard of somebody making one? Ideal handling of such situations is difficult to decide, but there are many other issues to decide as well.”

Situations where cars would have to choose are so rare, Brad argues, that car manufacturers likely won’t even consider them for a long time to come.

“The morbid focus on the trolley problem creates, to some irony, a meta-trolley problem. If people start expressing the view that ‘we can’t deploy this technology until we have a satisfactory answer to this quandary’ then they face the reality that if the technology is indeed life-saving, then people will die through their advised inaction who could have been saved, in order to be sure to save the right people in very rare, complex situations.”

The answer to the modern trolley problem, Brad argues, is a relatively simple one. At least until scientists develop sentient, ‘alive’ AI (and we all know how that ends…), driverless cars, and computers in general, can only do what they are programmed to do. What this means is that no car will ever have to make a decision – it will already know what to do in every situation, based on some pre-set instructions from its manufacturer.

Programmers will have to set their cars up to follow the law; that’s a legal given. So we should expect every car to follow the laws of the road to the letter, in every situation. Put simply, it looks like we won’t be seeing cars swerving out of their lanes to avoid accidents, pitching onto pavements or doing any dangerous braking – they’ll be as safe, cautious and predictable as it’s possible to be.

In a way, it’s comforting to know that you’ll never be deliberately killed by a driverless car – there’s no risk of one hitting you during some dangerous manoeuvre, and one will never pull out at random to hit you.

On the other hand, it adds a grim predictability to any accident – see a driverless car hurtling towards you? You can be sure it won’t take any evasive action. Great.